When practicing continuous improvement, we need to know that our efforts are positively affecting the system concerned. Did our change fundamentally improve our software systems and processes, or detract from them? To answer that, we must measure outcomes.

Our ultimate outcome is customer happiness. In software, our customer might be the business or external customers, but either way our ultimate measure of success is happy customers: customers who feel we’re delivering change that meets their needs and delights them. Part of that is throughput. It’s no good doing that once a year; we need to do it continuously.

As in a hierarchy of needs, customer happiness sits at the top of the pyramid and is built on our stability and throughput outcomes. Maintain high stability and throughput throughout the life of a software system and we’re most of the way to creating happy customers (assuming, of course, that we’re working on what the customer wants and needs!).

I’ve been writing software for almost three decades now, and in that time I’ve seen teams consistently fail to sustain this. I’ve been part of greenfield projects, been there when those greenfields turned into more complex brownfields, and been brought in to help sort out the balls of mud they inevitably degrade into. At the end there is usually a business that is frustrated with its development teams and desperate to get change delivered.

Traditional software best practice tells us that the architecture (the shape) of the software must be designed with change in mind, because over the long term it will have the greatest impact. And yet even in systems whose engineers believe they are “well architected” (CQRS, clean architecture, event driven, microservices, blah blah), I still see throughput and stability drop over the long term.

Compounded by long refactors that generate no value, this naturally leads to unhappy customers. The business shifts towards a more command-and-control culture in an attempt to “fix” the system’s ability to change. Deadlines, “rocks”, 90-day plans: none of them change the reality, and they often make it worse.

Ultimately, the business (the customer) notices a common denominator, the one thing that doesn’t change while every attempt to fix the problem fails: the engineers. Give the business an opportunity to replace engineering in the change loop (CRM and Salesforce systems, AI agents) and it will move towards it.

Regretful Systems

Poor customer relationships occur when our stability and throughput decrease. Our architecture has the greatest impact on this (more than teams, processes, etc.), and yet popular architectures (CQRS, microservices, event driven architecture, etc.) often inherently increase complexity over time. Simply by adding features, we make it harder to add new features and maintain the system, eroding our customer relationship.

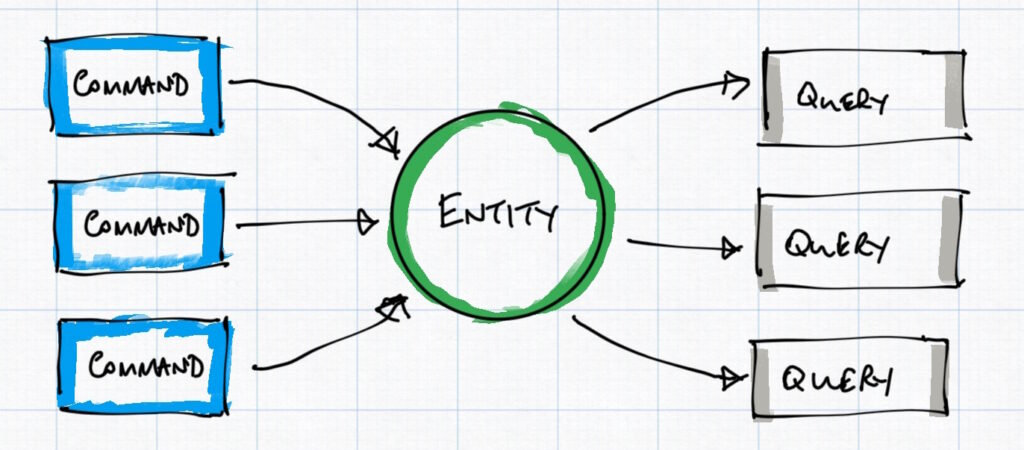

So why is this? Why do these popular approaches (on their own) lead to more complexity? I believe it’s because these architectures are frequently implemented around the entity, creating an entity obsession anti-pattern and implicit dependencies across capabilities.

Time and again I rock up to find a system that has been designed around the entity: a person, an invoice, a goober. All change (mutation) is retained in the entity in a storage back-end, so at any moment the system only knows the entity’s current state. Any new capability must extend the entity with more properties, with more and more logic interacting with it. This creates implicit dependencies between our commands, via their explicit dependency on the shared entity.
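To make that shape concrete, here is a minimal sketch in Python (the post names no language, so that choice is an assumption) using a hypothetical `Invoice` entity and three hypothetical commands. It isn’t taken from any real system; it only shows how commands end up coupled through the fields of the entity they share, and how only the current state survives.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical entity-centric (CRUD) model: every command mutates the same
# Invoice record, and only its latest state is kept.
@dataclass
class Invoice:
    id: str
    total: float
    status: str = "draft"  # read and written by several commands
    paid: float = 0.0

# In-memory stand-in for the storage back-end: id -> current state only.
store: Dict[str, Invoice] = {}

def send_invoice(invoice_id: str) -> None:
    store[invoice_id].status = "sent"

def apply_discount(invoice_id: str, percent: float) -> None:
    inv = store[invoice_id]
    # Implicit dependency: relies on record_payment being the only thing
    # that sets status to "paid".
    if inv.status == "paid":
        raise ValueError("cannot discount a paid invoice")
    # The original total is overwritten; it is gone for good.
    inv.total = round(inv.total * (1 - percent / 100), 2)

def record_payment(invoice_id: str, amount: float) -> None:
    inv = store[invoice_id]
    inv.paid += amount
    # Implicit dependency: reads total, which apply_discount may have changed.
    if inv.paid >= inv.total:
        inv.status = "paid"

store["inv-1"] = Invoice(id="inv-1", total=100.0)
send_invoice("inv-1")
apply_discount("inv-1", 10)
record_payment("inv-1", 90.0)
print(store["inv-1"])  # Invoice(id='inv-1', total=90.0, status='paid', paid=90.0)
```

After the discount, the stored record says the total is 90.0; nothing records that it was ever 100.0 or that a discount was applied. And any change to how one command sets `status` or `total` silently changes the behaviour of the others.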

Many architectural methods attempt to build frameworks around the entity to provide abstraction and minimise the impact of change. CQRS, event driven architecture, microservices and the like all go to great lengths to construct barriers, guards and wrappers, but under the hood you still have entities creating explicit and implicit dependencies.

Because every feature adds to the entity, features also implicitly affect each other. New features require more and more components to be updated, tested and put at risk. Over time, change becomes more prone to instability and harder to deliver reliably or quickly. Stability drops and throughput crashes. The remedies that follow often make matters worse: for example, development teams are encouraged to “manually test” their work through manual regression testing.

Regret

Entity-obsessed architectures lead to regret: if only we’d known X at the time, we’d have designed it this way. Technical leaders convince the business that with just six months of closed refactoring they can fix it and get back to performing. Even if the business agrees, it has to wait 18 months before the new version is completed. The instability and slow throughput soon manifest again, completely destroying any credibility and trust between engineering and the business. Critically, the all-important relationship between software developers and the customer has been significantly damaged.

CRUD, entity-obsessed architectures always inherently build complexity and lead to regret. Adding a fourth command to a system of three implicitly increases the complexity across every command. Every change adds complexity in a geometric, unpredictable (fractal?) way.
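One rough way to picture this growth, assuming (as an illustrative simplification) that every command can interact with every other through the shared entity, is to count the possible interactions among $n$ commands: $P(n)$ pairs, and $C(n)$ combinations of two or more commands.

$$
P(n) = \binom{n}{2} = \frac{n(n-1)}{2}, \qquad C(n) = \sum_{k=2}^{n} \binom{n}{k} = 2^n - n - 1
$$

$$
P(3) = 3,\; P(4) = 6, \qquad C(3) = 4,\; C(4) = 11
$$

Under this model the fourth command doubles the pairwise interactions and nearly triples the combinations to reason about. It is a counting illustration, not a measurement; real coupling depends on which fields each command actually reads and writes.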

Another common source of regret is needing historical information we didn’t know we would need. Because the system only ever stored current state, new features have to be released without that history, damaging engineers’ credibility.

Fretting over Regret

Despite our best architecture, processes and patterns, we still see stability and throughput drop over time.

So engineers start spending more time on design. Let’s gather as much information as we can and make the right decisions at the start. Surely these problems happened because we simply didn’t think enough.

If only we think hard enough, we can create systems without regret! Let’s build all those features before anyone asks for them. This is clearly folly, but it happens. The throughput of software teams falls off a cliff as everyone agonises over the details of acceptance criteria and requirements, trying to anticipate whatever we haven’t considered so we don’t “go down a dead end” that requires an expensive refactor to resolve. Batch time rises and stability drops, as our “big thinking” produces bigger and more complex changes (i.e. higher risk!).

I’ve watched engineers tie themselves in knots trying to think their way through some imagined mind palace to find the “right” approach. This is no good for engineers’ mental health, no good for the business and no good for the customer. And all that design work does not improve stability or throughput.

Another Approach?

The problem of eliminating regret from systems has fascinated me for seven years or so. It speaks to the reality of the world we live in, how software models that world, and how we can best create systems that continually meet the needs of our customers. In doing so we create better environments to work in and, ultimately, happy customers and happy engineers.

Fundamentally, how can we create a system that consistently delivers change throughout its life while remaining stable and reliable? We need an approach that does not geometrically increase complexity with each new command.

An Event Sourced Domain Model (ESDM) can solve this for us by tapping into the fourth dimension of our software systems: time. We can strictly abstract commands using aggregates, and the present and the past become something we can move freely within. Using this new axis, we can significantly minimise our regret.
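A minimal sketch of the idea, assuming a single hypothetical invoice aggregate whose events live in an in-memory list (a real system would use an event store). The names are illustrative and are not Cascade ESDM’s API. State is never stored; it is rebuilt by folding over the events, command handlers only decide which new events to append, and replaying a prefix of the stream gives the state at an earlier point in time.

```python
from dataclasses import dataclass
from typing import List, Optional, Union

# Hypothetical domain events: immutable facts that are appended, never updated.
@dataclass(frozen=True)
class InvoiceRaised:
    total: float

@dataclass(frozen=True)
class DiscountApplied:
    percent: float

@dataclass(frozen=True)
class PaymentReceived:
    amount: float

Event = Union[InvoiceRaised, DiscountApplied, PaymentReceived]

@dataclass
class InvoiceState:
    """State derived from events; never stored as the source of truth."""
    total: float = 0.0
    paid: float = 0.0

    @property
    def is_paid(self) -> bool:
        return self.total > 0 and self.paid >= self.total

def apply(state: InvoiceState, event: Event) -> InvoiceState:
    """Return the state after one event (a single step of a left fold)."""
    if isinstance(event, InvoiceRaised):
        return InvoiceState(total=event.total, paid=state.paid)
    if isinstance(event, DiscountApplied):
        discounted = round(state.total * (1 - event.percent / 100), 2)
        return InvoiceState(total=discounted, paid=state.paid)
    if isinstance(event, PaymentReceived):
        return InvoiceState(total=state.total, paid=state.paid + event.amount)
    raise TypeError(f"unknown event: {event!r}")

def rehydrate(events: List[Event], upto: Optional[int] = None) -> InvoiceState:
    """Replay the stream; `upto` replays only the first N events (a past point in time)."""
    state = InvoiceState()
    for event in events[:upto]:
        state = apply(state, event)
    return state

# Command handlers: check the rehydrated state, then return new events to append.
def apply_discount(events: List[Event], percent: float) -> List[Event]:
    if rehydrate(events).is_paid:
        raise ValueError("cannot discount a paid invoice")
    return [DiscountApplied(percent)]

def receive_payment(events: List[Event], amount: float) -> List[Event]:
    return [PaymentReceived(amount)]

stream: List[Event] = [InvoiceRaised(100.0)]
stream += apply_discount(stream, 10)
stream += receive_payment(stream, 90.0)
print(rehydrate(stream))          # InvoiceState(total=90.0, paid=90.0)
print(rehydrate(stream, upto=1))  # InvoiceState(total=100.0, paid=0.0)
```

Unlike the entity version, the original total of 100.0 and the fact that a discount was applied are still in the stream, so the past remains available to any future feature.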

Engineers can make changes that focus on the need at hand without fretting about implicit impact on other parts of the system. Complexity increases in a more linear way, allowing us to manage it far more realistically.
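Here is a sketch of what that can look like, and how it also answers the missing-history regret described earlier. Suppose (hypothetically) the business asks, long after launch, how much money has been given away in discounts on each invoice. The events and the `discount_given_per_invoice` projection below are illustrative names, not Cascade ESDM’s API; the point is that the new read model is a separate function over events already recorded, so no existing command or entity has to change.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import DefaultDict, Dict, List, Union

# Hypothetical events, as they might already sit in an event store.
@dataclass(frozen=True)
class InvoiceRaised:
    invoice_id: str
    total: float

@dataclass(frozen=True)
class DiscountApplied:
    invoice_id: str
    percent: float

Event = Union[InvoiceRaised, DiscountApplied]

# History recorded before anyone asked the new question.
history: List[Event] = [
    InvoiceRaised("inv-1", 100.0),
    DiscountApplied("inv-1", 10),
    InvoiceRaised("inv-2", 200.0),
    DiscountApplied("inv-2", 5),
    DiscountApplied("inv-2", 5),
]

def discount_given_per_invoice(events: List[Event]) -> Dict[str, float]:
    """New read model added later: money given away in discounts, per invoice.

    It only reads past events; existing commands and projections are untouched.
    """
    running_total: Dict[str, float] = {}
    given: DefaultDict[str, float] = defaultdict(float)
    for event in events:
        if isinstance(event, InvoiceRaised):
            running_total[event.invoice_id] = event.total
        elif isinstance(event, DiscountApplied):
            before = running_total[event.invoice_id]
            after = round(before * (1 - event.percent / 100), 2)
            given[event.invoice_id] += round(before - after, 2)
            running_total[event.invoice_id] = after
    return dict(given)

print(discount_given_per_invoice(history))  # {'inv-1': 10.0, 'inv-2': 19.5}
```

Because the answer is derived from history rather than stored state, the new feature ships with full historical data on day one, and adding it touches nothing that already works.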

This blog and my framework, Cascade ESDM, explore how we can all benefit from this architectural approach, which sits at the heart of Domain Driven Design, without the huge costs and risks of rolling your own framework.

Introductions

Why don’t we use ESDM now?

If ESDM really is such a good approach for your system architecture,…

Study: Regretful System vs ESDM

Let’s take a look at a scenario I frequently use to explore…

Event Sourced Domain Model, in a nutshell

Implementing Cascade from a Greenfield

Easy street, the dream come true!